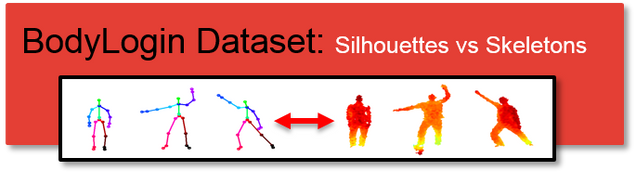

BodyLogin Dataset: Silhouettes vs Skeletons


Description

BodyLogin Dataset: Silhouettes vs Skeletons (BLD-SS) – To be presented at AVSS 2014

BLD-SS is a Kinect dataset of gestures recorded under various degradations. Data is provided in two forms: unprocessed skeletal joint coordinates and depth maps. This dataset was used to compare the performance of silhouette and skeletal features in “real-world” scenarios.

Two gestures were recorded for each user:

- S gesture: drawing an “S” shape with both hands. Although simple, this gesture is shared by all users, which makes authentication harder.

- User-defined gesture: the user chooses their own gesture with no instruction. Although potentially complex, this gesture is unique for most users, which makes authentication easier.

Data Acquisition

Each user gesture was captured using a forward-facing Kinect for Windows camera (version 1) approximately 2 meters away. The Kinect camera captured a 640×480 depth image and skeletal joint coordinates at 30 fps. All data was captured using the official Microsoft Kinect SDK.

Four different types of gesture acquisition scenarios were enacted to capture different types of real-world degradations: no degradations, personal effects, user memory, and gesture reproducibility.

- Personal effects: Users either wore or carried something when performing a gesture. Half of the users were told to wear heavier clothing, and the other half were told to carry some type of bag.

  - Users wore a variety of heavier clothing: sweatshirts, windbreakers, and jackets.

  - They carried backpacks (on a single shoulder or both), messenger bags, and purses.

- User memory and gesture reproducibility: The impact of user memory was tested by collecting samples a week after a user first performed a gesture. Users were first asked to perform the gesture without any video or text prompt. After a few samples were recorded, the user was shown a prompt and asked to perform the gesture again. These last samples measure reproducibility.

Each user recorded 20 samples per gesture: 5 samples for each of the four scenarios described above. The following table summarizes the degradation scenarios that were used for each gesture.

| Session ID | S gesture | User-defined gesture |
|---|---|---|
| Session I | 1. Observe video and text description of gesture 2. No degradation: Perform gesture normally (5 times) 3. Personal effects: Wear a coat or carry a bag. 4. Perform gesture with personal effect (5 times) | 1. Create a custom gesture 2. No degradation: Perform gesture normally (5 times) 3. Personal effects: Wear a coat or carry a bag. 4. Perform gesture with personal effect (5 times) |
| Session II (a week after) | 1. Memory: Perform gesture from memory (5 times) 2. Observe video and text description of gesture 3. Reproducibility: Perform gesture (5 times) | 1. Memory: Perform gesture from memory (5 times) 2. Observe video of prior performance from session I 3. Reproducibility: Perform gesture (5 times) |

Data Definitions

Data is provided as both depth maps and unprocessed skeletal joint coordinates (from the Kinect SDK). Depth-map data is provided as large zipped .png files. Skeletal joint coordinates are provided as .mat files. Sample MATLAB code is provided in a .zip file to help with initializing and visualizing both forms of data, and with extracting silhouettes from the depth data (segmentation). Each gesture has its own .mat and .zip file. Index definitions used in the sample script can be found later in this section. The download form can be accessed directly here: Download Form.

The data provided in this dataset complements and partially overlaps with the data in BodyLogin Dataset: Multiview (BLD-M). Specifically, this dataset adds depth map data pertaining to the center camera.

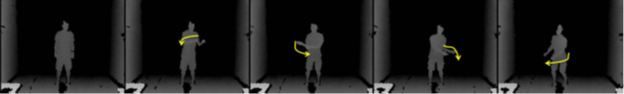

When used correctly, the provided function sample.m extracts two gesture samples from the dataset and displays them. The following videos illustrate the output that it should yield.

For the cell array `data`, we can access a specific gesture as follows:

`data{subject_id, session_id, sample_id, 3}`

The last index of the data structure represents viewpoint. For the purpose of this dataset, this index is always fixed to be 3 (center-forward facing viewpoint).
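As a minimal sketch of this indexing, the snippet below builds a placeholder cell array and reads one sample from it. The array size (40 subjects, 2 sessions, 10 samples, and a viewpoint dimension of length 3) and the dummy contents are assumptions made for illustration only; with the real dataset, `data` would come from one of the provided .mat files, and the layout of each sample should be checked against the provided sample code.

```matlab
% Placeholder standing in for the loaded cell array (assumed size; the real
% array comes from one of the dataset's .mat files).
data = cell(40, 2, 10, 3);
data{12, 2, 4, 3} = rand(5, 3);   % dummy contents, not the real sample format

subject_id = 12;   % 1 to 40
session_id = 2;    % 1 or 2
sample_id  = 4;    % 1 to 10
viewpoint  = 3;    % always 3 in this dataset (center, forward-facing)

sample = data{subject_id, session_id, sample_id, viewpoint};

% Unavailable recordings are stored as empty matrices, so check before use.
if isempty(sample)
    fprintf('Sample (%d, %d, %d) is unavailable.\n', ...
            subject_id, session_id, sample_id);
else
    fprintf('Sample (%d, %d, %d) has size %s.\n', ...
            subject_id, session_id, sample_id, mat2str(size(sample)));
end
```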

ID definitions are provided for the rest of the indices in the following tables.

User-defined Gesture Descriptions

| Subject ID | Description | Subject ID | Description |
|---|---|---|---|
| 1 | Double armed backstrokes | 21 | Shoot basketball |
| 2 | Y of Y-M-C-A | 22 | Parallel arms forward |
| 3 | Backwards jump-rope | 23 | Muscular pose |
| 4 | Backhand Tennis Swing | 24 | Wipe away motion |
| 5 | X-pose | 25 | Kamehameha |
| 6 | Upper body stretch | 26 | Upwards pose |
| 7 | Upper meditation | 27 | Salute |
| 8 | Halfway spin | 28 | Rockstar |
| 9 | Left arm chopping | 29 | Double clap |
| 10 | Long swimming frontcrawl | 30 | Stretches |
| 11 | Crouch forward swim | 31 | Taichi pose |
| 12 | Left-right body tilt | 32 | Taichi stretches |
| 13 | Arm-to-head stretch | 33 | Double wave |
| 14 | Balance-something | 34 | Stretch leg up |
| 15 | Chopping action | 35 | Jump pose |
| 16 | Dribble | 36 | Golf swing |
| 17 | Assorted poses | 37 | Shake imaginary maracas |
| 18 | Upper stretches | 38 | Y of Y-M-C-A (duplicate) |
| 19 | Upper flutter | 39 | Slow wave |
| 20 | Stretch and bend | 40 | Double hand stretch left right |

Gesture Type

| Session ID | Sample ID | Description |
|---|---|---|
| 1 | [1 – 5] | No Degradations |
| 1 | [6 – 10] | Personal Effects |
| 2 | [1 – 5] | User Memory |
| 2 | [6 – 10] | Gesture Reproducibility |
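The table above can be expressed as a small lookup. The following sketch is one way to map a (session ID, sample ID) pair to its scenario; the names `labels` and `scenario_of` are illustrative and are not part of the provided sample code.

```matlab
% Rows: session ID (1 or 2).
% Columns: sample block (1 = samples 1-5, 2 = samples 6-10).
labels = {'No Degradations', 'Personal Effects'; ...
          'User Memory',     'Gesture Reproducibility'};

% ceil(sample_id / 5) maps samples 1-5 to block 1 and samples 6-10 to block 2.
scenario_of = @(session_id, sample_id) labels{session_id, ceil(sample_id / 5)};

fprintf('Session 1, sample 3: %s\n', scenario_of(1, 3));
fprintf('Session 2, sample 8: %s\n', scenario_of(2, 8));
```

Keeping the labels in a 2-by-2 cell array mirrors the table directly, so a change to the table only needs a change in one place.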

Personal Effects Listing

For samples involving personal effects, users were assigned to either hold an object or wear clothing. The assignments are shown as follows.

| Personal Effect | Subject IDs with Effect |
|---|---|
| Holding Object | [1 – 7], 10, 15, 19, 22, 23, 25, [27 – 29], 34, 36, 38, 40 |
| Clothing | 8, 9, [11 – 14], [16 – 18], 20, 21, 24, 26, [30 – 33], 35, 37, 39 |
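For scripts that need the assignment per subject, the listing can be encoded as index vectors. This is a sketch only: the variable names `holding`, `clothing`, and `effect` are hypothetical, and the subject IDs are copied from the table above.

```matlab
% Subject IDs copied from the Personal Effects Listing table.
holding  = [1:7, 10, 15, 19, 22, 23, 25, 27:29, 34, 36, 38, 40];
clothing = [8, 9, 11:14, 16:18, 20, 21, 24, 26, 30:33, 35, 37, 39];

% Sanity check: the two groups are disjoint and together cover subjects 1-40.
assert(isempty(intersect(holding, clothing)));
assert(isequal(sort([holding, clothing]), 1:40));

% effect{subject_id} gives the personal effect assigned to that subject.
effect = repmat({'Clothing'}, 1, 40);
effect(holding) = {'Holding Object'};

fprintf('Subject 10: %s\n', effect{10});
fprintf('Subject 11: %s\n', effect{11});
```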

Missing Data

The following data is unavailable and will appear as an empty matrix in the cell array.

| Gesture | Subject ID | Session ID | Sample ID |
|---|---|---|---|
| User Defined | 18 | 2 | 10 |
| User Defined | 37 | 1 | [7 – 10] |
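Because unavailable samples appear as empty matrices, they can be located with `cellfun(@isempty, ...)` rather than hard-coded. The sketch below demonstrates this on a placeholder cell array whose size and contents are assumptions; the empty entries are placed to mirror the table above for the user-defined gesture.

```matlab
% Placeholder cell array (assumed size); every entry is filled with a dummy
% value, then the entries listed in the Missing Data table are emptied.
data = cell(40, 2, 10, 3);
data(:, :, :, 3) = {0};
data{18, 2, 10, 3} = [];
data(37, 1, 7:10, 3) = {[]};

% Logical mask over (subject_id, session_id, sample_id) for viewpoint 3.
is_missing = cellfun(@isempty, data(:, :, :, 3));

% Convert the mask into explicit ID triples, one row per missing sample.
[subject_id, session_id, sample_id] = ind2sub(size(is_missing), find(is_missing));
missing_ids = [subject_id, session_id, sample_id];

fprintf('%d samples are missing.\n', size(missing_ids, 1));
disp(missing_ids);
```

Processing loops can then skip any sample for which `is_missing(subject_id, session_id, sample_id)` is true.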

Off-labeled Data

The following data is available for use in the dataset, but conflicts with the labels defined above: these samples have sample IDs in the personal-effects range, yet were recorded without personal effects.

| Gesture | Subject ID | Session ID | Sample ID | Comment |
|---|---|---|---|---|
| S | 25 | 1 | [6 – 10] | No Personal Effects |
| S | 38 | 1 | [6 – 10] | No Personal Effects |
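One way to account for these exceptions is to build a per-sample label mask for the S gesture and then override the off-labeled entries. This is a sketch under the assumption of 40 subjects, 2 sessions, and 10 samples per session; the name `has_effect` is hypothetical.

```matlab
% has_effect(subject_id, session_id, sample_id) is true when the S-gesture
% sample was recorded with a personal effect.
has_effect = false(40, 2, 10);

% Nominal labels: session 1, samples 6-10 are the personal-effects samples.
has_effect(:, 1, 6:10) = true;

% Off-labeled data: subjects 25 and 38 performed these samples without
% personal effects, so the nominal label is overridden.
has_effect([25, 38], 1, 6:10) = false;

fprintf('S-gesture samples with personal effects: %d\n', nnz(has_effect));
```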

Download Form

You may use this dataset (BLD-SS) for non-commercial purposes. If you publish any work reporting results using this dataset, please cite the following work:

- J. Wu, P. Ishwar, and J. Konrad, “Silhouettes versus Skeletons in Gesture-Based Authentication with Kinect,” in Proc. IEEE Int. Conf. Advanced Video and Signal-Based Surveillance (AVSS), Aug. 2014.

To access the download page, please complete the following form. When ALL the fields have been filled, a submit button will appear which will redirect you to the download page.

Contact

Please contact [jonwu] at [bu] dot [edu] if you have any questions.

Acknowledgements

This dataset was acquired within a project supported by the National Science Foundation under grant CNS-1228869.

We would like to thank the students of Boston University for their participation in the recording of our dataset. We would also like to thank Luke Sorenson and Lucas Liang for their significant contribution to the data gathering and tabulation processes.