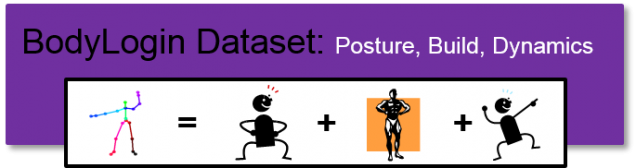

BodyLogin Dataset: Posture, Build and Dynamics


Description

BodyLogin Dataset: Posture, Build and Dynamics (BLD-PBD) – To be presented at IJCB 2014

BLD-PBD is a Kinect dataset that contains gestures of increasing difficulty. These gestures are recorded across two sessions. In the first session, users perform gestures naturally, whilst in the second session, users perform gestures with the intention of forging another user’s gesture (spoof attacks). Data is provided as unprocessed skeletal joint coordinates. This dataset was used to evaluate the value of posture, build, and dynamics in gesture-based authentication, as well as to evaluate spoof attacks.

The BodyLogin Dataset: Posture, Build, and Dynamics (BLD-PBD) contains 3 gesture types performed by 36 different college-affiliated users (25 men and 11 women, primarily aged 18-33 years). Each subject was asked to perform 3 distinct short gestures, each approximately 3 seconds long, with 20 samples of each gesture. Gestures were designed to be of increasing complexity (left-right, double-handed arch, and balancing, from easiest to hardest), and involved movement in both the upper and lower body.

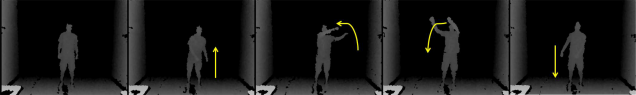

- Left-right gesture: user reaches right shoulder with left hand, and then reaches left shoulder with right hand.

- Double-handed arch gesture: user draws an arch from left to right with both hands.

- Balancing gesture: user first raises right arm forward while pulling left arm back, then balances by forward sweeping left leg while simultaneously tucking left arm in and bringing right arm to rest.

Data Acquisition

Each user gesture was captured using a forward-facing Kinect for Windows camera (version 1) approximately 2 meters away. The camera captured a 640×480 depth image and skeletal joint coordinates at 30 fps. All data were captured using the official Microsoft Kinect SDK.
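As a rough illustration of what these capture settings imply for sequence length, the sketch below estimates the number of skeletal frames in one gesture. The function name is hypothetical, and the 3-second duration is only the approximate figure quoted in the Description, so actual sequences will vary in length.

```python
def expected_frame_count(duration_s: float, fps: float = 30.0) -> int:
    """Estimate how many frames a recording of duration_s seconds contains."""
    if duration_s < 0 or fps <= 0:
        raise ValueError("duration must be non-negative and fps must be positive")
    return round(duration_s * fps)


# A gesture of roughly 3 seconds captured at 30 fps spans about 90 frames.
print(expected_frame_count(3.0))
```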

Gesture samples were collected in two sessions that were separated by one week. In the first session, each user was instructed how to perform each gesture through a text and video prompt (a multi-modal instruction scheme). In the literature, a multi-modal instruction scheme is known to improve gesture reproducibility over a single-mode instruction scheme (e.g., text or video only).

After the first session, each of the 3 gestures of each user was matched to an attack target for use in the second session. Attack targets were found by comparing the “centroid” samples of each user to one another (please refer to the paper cited in the Download Form section for details). These attack target assignments are also provided in this dataset.

Data Definitions

Data is provided in the form of unprocessed skeletal joint coordinates. BLD-PBD provides 5 files (4 MATLAB .mat files and a .zip file). Gesture sequences (unprocessed skeletal joint coordinates) are stored in the .mat files and can be accessed using specific indexing of the cell data structure. Each gesture has its own .mat file. We have also provided a .zip file with helper functions that visualize the skeletal sequences, as well as a sample script that shows how to extract and display a gesture. The download form can be accessed directly here: Download Form.

The provided script sample.m extracts all gesture samples from the dataset and displays them. A video showing part of this output accompanies the dataset page.

For the cell data structure data, we can access a specific gesture as follows:

data{subject_id,session_id,sample_id}

ID definitions are provided in the following tables.

Gesture Type

| Session ID | Sample ID | Description |
|---|---|---|
| 1 | * | No Degradations |
| 2 | * | Attacking matched user |
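The indexing shown above is MATLAB syntax and is 1-based. For readers who load the data into a 0-based environment such as Python (an assumption; the dataset ships MATLAB helpers only), each ID has to be shifted by one. The sketch below validates the three IDs and converts them. The function name, the `SESSION_LABELS` mapping, and the upper bounds (36 subjects, 2 sessions, 20 samples) are illustrative assumptions drawn from the description, so they should be checked against the actual files.

```python
# Bounds assumed from the dataset description; verify against the actual files.
NUM_SUBJECTS = 36
NUM_SESSIONS = 2
NUM_SAMPLES = 20

# Session descriptions as listed in the table above.
SESSION_LABELS = {1: "No Degradations", 2: "Attacking matched user"}


def to_zero_based(subject_id: int, session_id: int, sample_id: int) -> tuple:
    """Convert 1-based (subject, session, sample) IDs to 0-based indices."""
    names = ("subject_id", "session_id", "sample_id")
    ids = (subject_id, session_id, sample_id)
    limits = (NUM_SUBJECTS, NUM_SESSIONS, NUM_SAMPLES)
    for name, value, limit in zip(names, ids, limits):
        if not 1 <= value <= limit:
            raise ValueError(f"{name}={value} is outside 1..{limit}")
    return tuple(value - 1 for value in ids)


index = to_zero_based(21, 1, 10)
print(index, SESSION_LABELS[1])
```

Validating before subtracting avoids a silent wrap-around: in Python an index of -1 would quietly select the last element instead of raising an error.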

Missing Data

The following data is unavailable and will appear as an empty matrix in the cell array.

| Gesture | Subject ID | Session ID | Sample ID |
|---|---|---|---|
| Balancing | 21 | 1 | 10 |
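Because a missing recording appears as an empty matrix, code that iterates over the cell array should skip empty entries rather than assume every slot holds a sequence. The sketch below shows the idea in Python on a small stand-in structure: nested lists play the role of the cell array and an empty list plays the role of the empty matrix. The function name and the toy data are hypothetical and do not reflect the real file layout.

```python
def iter_available(data):
    """Yield (subject_id, session_id, sample_id, sequence) for non-empty entries.

    IDs are reported 1-based so they match the tables in this section.
    """
    for subject_id, sessions in enumerate(data, start=1):
        for session_id, samples in enumerate(sessions, start=1):
            for sample_id, sequence in enumerate(samples, start=1):
                if len(sequence) == 0:  # missing recording, skip it
                    continue
                yield subject_id, session_id, sample_id, sequence


# Toy stand-in indexed as data[subject][session][sample].
toy = [
    [[[0.1, 0.2, 0.3]], [[0.4, 0.5, 0.6]]],  # subject 1: both sessions present
    [[[]], [[0.7, 0.8, 0.9]]],               # subject 2: session 1 sample missing
]

available = [(i, j, k) for i, j, k, _ in iter_available(toy)]
print(available)
```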

Download Form

You may use this dataset (BLD-PBD) for non-commercial purposes. If you publish any work reporting results using this dataset, please cite the following paper:

- J. Wu, P. Ishwar, and J. Konrad, “The Value of Posture, Build and Dynamics in Gesture-Based User Authentication,” in Proc. IEEE International Joint Conference on Biometrics (IJCB), Oct. 2014.

To access the download page, please complete the following form. When ALL the fields have been filled, a submit button will appear which will redirect you to the download page.

Contact

Please contact [jonwu] at [bu] dot [edu] if you have any questions.

Acknowledgements

This dataset was acquired within a project supported by the National Science Foundation under grant CNS-1228869.

We would like to thank the students of Boston University for their participation in the recording of our dataset. We would also like to thank Luke Sorenson and Lucas Liang for their significant contribution to the data gathering and tabulation processes.