HandLogin Dataset

Motivation

Depth sensors such as the Kinect have predominantly been used for gesture recognition. Recent works, however, have proposed using these sensors for user authentication based on biometric modalities such as face, speech, gait, and gesture. The last of these, gestures (both full-body and hand-based), is a relatively new modality but has shown promising authentication performance.

This dataset is intended for evaluating user authentication from depth images captured by a Kinect v2 sensor.

Description

The HandLogin dataset contains 4 gesture types performed by 21 college-affiliated users. Each user performed all 4 gestures, each designed to last a few seconds, and 10 samples of each gesture were recorded.

A Kinect v2, a time-of-flight depth sensor, was used to record each gesture sample as a sequence of 512×424 depth frames at 30 fps. Each sample was recorded close to an upward-facing sensor (approximately 50 cm away) to maximize hand resolution. Between samples, users were instructed to leave and re-enter the recording area to reduce arm- and hand-pose biases. Users were also given both text and video instructions, since combining the two is known to improve gesture reproducibility compared with either one alone [S. Fothergill, ACM CHI 2012].

All 4 gestures were performed with the right hand, starting from a “rest” position: the hand extended downwards above the ceiling-facing Kinect sensor, with the fingers spread comfortably apart. The sensor orientation was chosen to mimic an authentication terminal, where typically only a single user is visible.

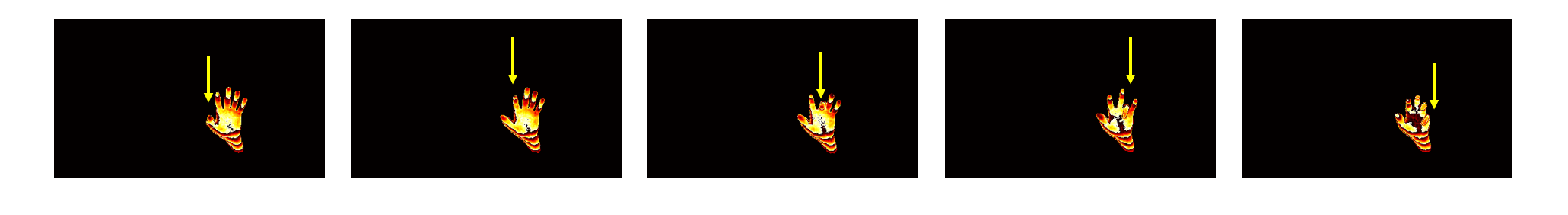

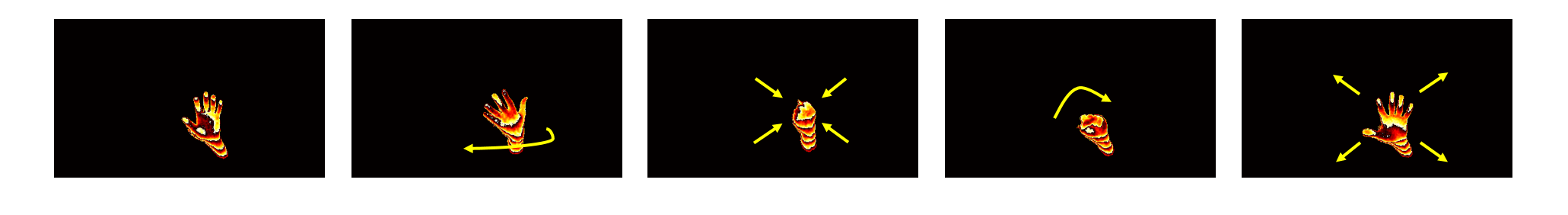

The following gestures were recorded (the images show only the lower 4 bits of depth, for visualization):

- Piano: users “press” the keys of an imaginary keyboard with their fingers, one by one, starting from the thumb and ending with the pinky; this gesture evaluates subtle fingertip movements.

- Push: users pull the arm back and then push it towards the sensor; this gesture evaluates translation towards the camera (depth change).

- Flipping Fist: users first flip the hand over and close it into a fist, then flip the fist back over and open it into the starting hand pose in front of the sensor; this gesture evaluates the effect of occlusions and more complex fingertip motion.

The dataset is provided as raw depth .png images packaged in multiple compressed .zip files. For easier downloading, the data are split into chunks by groups of users.

As the Kinect v2 records 16-bit depth values, each frame has been saved as two 8-bit images: LSB (the lower 8 bits, i.e., the least-significant byte) and MSB (the upper 8 bits, i.e., the most-significant byte).
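Recombining the two planes amounts to a bit shift and a bitwise OR: depth = MSB × 256 + LSB. The sketch below assumes both PNGs have already been read into single-channel `uint8` NumPy arrays of the same shape (for example with Pillow's `Image.open` wrapped in `np.asarray`). The function names `combine_depth` and `lower4_for_display` are illustrative only and are not part of the provided MATLAB code; the second function shows one way to produce a lower-4-bit view similar to the gesture images above.

```python
import numpy as np


def combine_depth(lsb: np.ndarray, msb: np.ndarray) -> np.ndarray:
    """Rebuild a 16-bit depth frame from its 8-bit LSB and MSB images."""
    if lsb.shape != msb.shape:
        raise ValueError("LSB and MSB frames must have the same shape")
    # Widen to uint16 *before* shifting; shifting uint8 by 8 would lose the MSB.
    return (msb.astype(np.uint16) << 8) | lsb.astype(np.uint16)


def lower4_for_display(depth: np.ndarray) -> np.ndarray:
    """Keep only the lower 4 bits of depth and stretch 0..15 to 0..255."""
    return ((depth & 0x0F) * 17).astype(np.uint8)
```

Because the lower 4 bits live entirely in the LSB plane, `lsb & 0x0F` gives the same visualization without recombining the frame first.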

The frames of a particular gesture sample are stored in the following folder structure:

[user num]\[gesture name]\Test[sample num]\[LSB or MSB]

Important note: “Flipping Fist” is represented as “ACDO” in these files. In addition, the first frame of each sequence (001.png) is a background frame, to be used for background subtraction and for segmenting the user's hand.

For example, the least-significant-bit frames of user 11, Push gesture, sample 1 are in the folder:

11\Push\Test001\LSB
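A small helper can assemble these paths programmatically. This sketch uses `pathlib.Path`, so separators follow the host OS rather than the backslashes shown above. Several details are assumptions rather than documented facts: that gesture folders other than ACDO are named after the gesture (as `Push` is), that user folders are the plain user number (confirmed above only for user 11), and that frame files after 001.png use the same three-digit zero padding. The helper names and the root folder `HandLogin` are hypothetical.

```python
from pathlib import Path

# Only the "Flipping Fist" -> "ACDO" rename is documented; any other gesture is
# assumed (hypothetically) to use its display name as the folder name, like "Push".
FOLDER_NAMES = {"Flipping Fist": "ACDO"}


def sample_dir(root: str, user: int, gesture: str, sample: int, plane: str) -> Path:
    """Folder holding every frame of one bit plane of one gesture sample."""
    if plane not in ("LSB", "MSB"):
        raise ValueError("plane must be 'LSB' or 'MSB'")
    folder = FOLDER_NAMES.get(gesture, gesture)
    # Assumption: user folders are the plain user number, as in "11".
    # Sample folders are zero-padded to three digits, as in "Test001".
    return Path(root, str(user), folder, f"Test{sample:03d}", plane)


def frame_name(index: int) -> str:
    """File name of a frame; index 1 (001.png) is the background frame.

    Assumption: later frames use the same three-digit padding.
    """
    return f"{index:03d}.png"


# Usage (hypothetical root folder name):
# sample_dir("HandLogin", 11, "Push", 1, "LSB") / frame_name(1)
```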

For ease of use, we provide MATLAB code to visualize, load, segment, and extract spatio-temporal covariance features from the dataset.
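The provided MATLAB code remains the reference for segmentation. Purely as an illustration of the idea, the following Python sketch thresholds the absolute difference between a frame and the background frame (001.png, described in the note above). The function name `segment_hand`, the threshold `min_diff`, and its default of 20 raw depth units are hypothetical and would need tuning; treating zero depth as an invalid reading is an assumption about the Kinect v2 output, not something stated on this page.

```python
import numpy as np


def segment_hand(frame: np.ndarray, background: np.ndarray, min_diff: int = 20) -> np.ndarray:
    """Boolean mask of pixels whose depth differs from the background frame.

    frame, background: 16-bit depth frames of the same shape.
    min_diff: hypothetical threshold in raw depth units.
    """
    # Use signed integers so differences in either direction are kept.
    diff = np.abs(frame.astype(np.int32) - background.astype(np.int32))
    # Assumption: a depth of 0 means "no reading", so such pixels are ignored.
    valid = frame > 0
    return (diff >= min_diff) & valid
```

Both inputs are expected to be 16-bit frames already recombined from their LSB and MSB planes. A practical pipeline would likely also clean the resulting mask, for example by keeping only the largest connected region.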

Known issue: user 20 has no samples of the “Flipping Fist” gesture.
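When checking a complete download, the expected number of recorded gesture sequences follows from the counts in the Description, assuming no sequences are missing other than user 20's 10 Flipping Fist samples:

```latex
21~\text{users} \times 4~\text{gestures} \times 10~\text{samples} - 10 = 840 - 10 = 830~\text{sequences}
```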

For additional details (features, benchmarks, etc.), please refer to the paper listed below.

Download Form

You may use this dataset for non-commercial purposes. If you publish any work reporting results using this dataset, please cite the following paper:

J. Wu, J. Christianson, J. Konrad, and P. Ishwar, “Leveraging Shape and Depth in User Authentication from In-Air Hand Gestures,” in Proc. IEEE International Conference on Image Processing (ICIP), Sept. 2015.

To access the download page, please complete the following form.

Once all fields have been filled in, a submit button will appear that redirects you to the download page.

Contact

Please contact [jonwu] at [bu] dot [edu] if you have any questions.

Acknowledgements

The development of this dataset was supported by the NSF under award CNS-1228869, by Cooperative Agreement No. EEC-0812056 from the ERCP, and by BU UROP.

We would also like to thank the students of Boston University for their participation in the recording of our dataset.